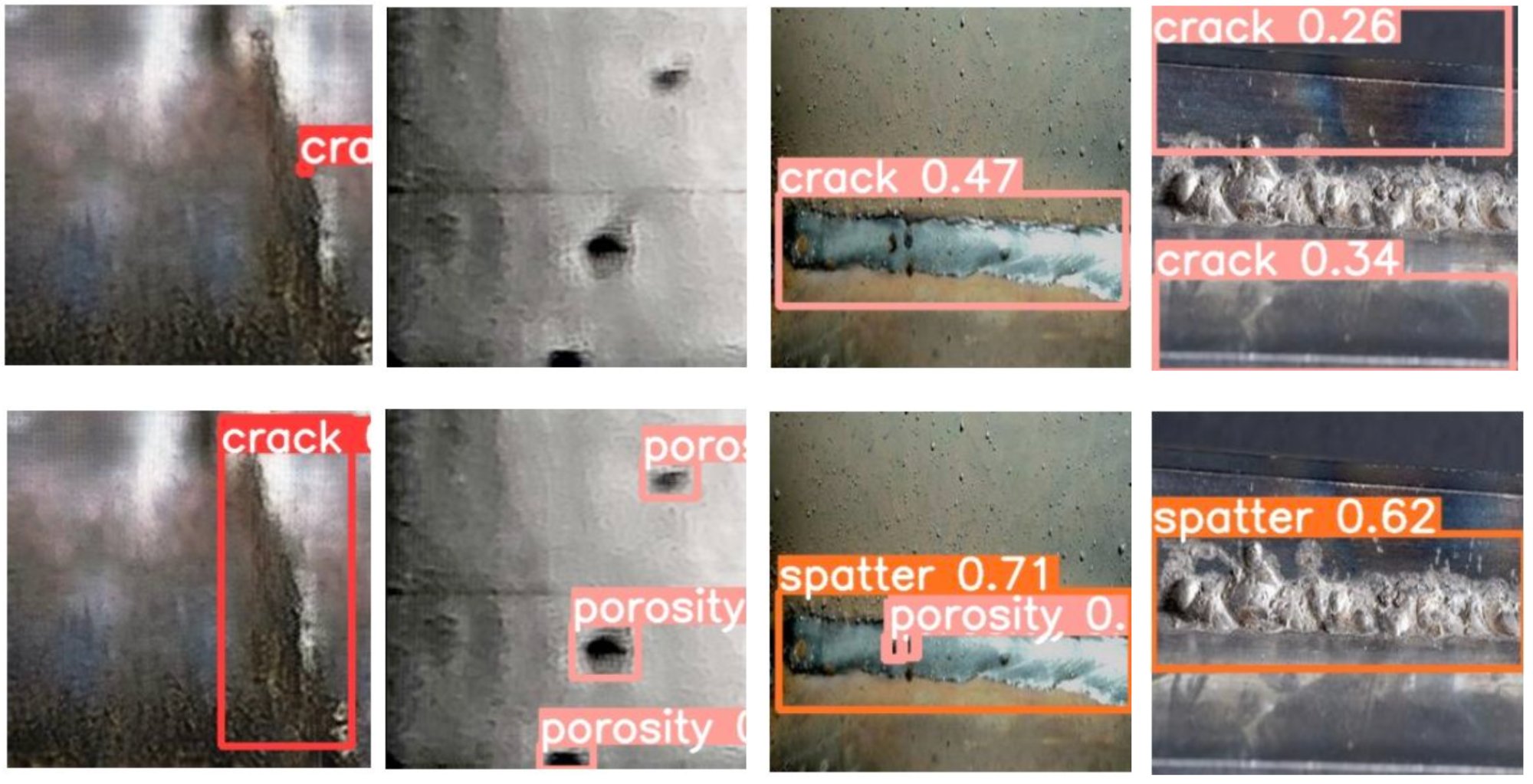

Welding is a critical manufacturing process in which defects can degrade product performance or, in severe cases, create safety hazards. Traditional inspection relies on visual examination and non-destructive testing, methods that are time-consuming, costly, and highly dependent on the inspector's experience and skill.

Deep learning offers a path toward automated defect detection, but training such models requires large, high-quality datasets. In welding, such data is not publicly available: real defect images are restricted because of security concerns, and collecting them in production environments is expensive.